Programming requires thinking. You must place instructions correctly, in a logical order, and you must show that your program produces the right behaviour. Even with tests, your programs can fail, so you can use code hardening or similar techniques to reduce errors. Program verification is appealing, but it can only prove a limited set of properties; even when the verification is formal, you still need many different kinds of tests to check that the program is correct. So the main problem in programming is reaching correctness, and how concentrated you need to be to avoid mistakes such as passing a null pointer to a function, because a program can compile and still not work.
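To make the null pointer point concrete, here is a minimal sketch in Python, where `None` plays the role of the null pointer. The function names `average_length` and `average_length_hardened` are hypothetical, invented only for this illustration. Both functions are accepted by the interpreter without complaint, but only the hardened one fails in a controlled way when it receives `None`.

```python
def average_length(words):
    """Unhardened version: silently assumes a non-empty list of strings."""
    return sum(len(w) for w in words) / len(words)


def average_length_hardened(words):
    """Hardened version: validates its input before using it."""
    if words is None:
        # Fail early, with a message that names the real problem.
        raise ValueError("words must not be None")
    if not words:
        # An empty list has no average; returning 0.0 is an assumption
        # made for this sketch, not the only reasonable choice.
        return 0.0
    return sum(len(w) for w in words) / len(words)


if __name__ == "__main__":
    print(average_length_hardened(["ab", "abcd"]))
    try:
        average_length(None)  # loads fine, fails only when it runs
    except TypeError as error:
        print("unhardened call failed:", error)
```

The hardening does not prove the program correct. It only turns a confusing failure deep inside the function into an explicit one at its boundary, which is the limited kind of help I would expect from such techniques.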

There are programming paradigms, or programming models, which give you different ways to approach a programming problem. Not every problem can be solved with a computer, because problems come in several levels of complexity, and algorithms have several levels of complexity as well. Some people say that you cannot solve every programming problem with a single paradigm, and that you should probably use different paradigms for different problems. Meanwhile, the advocates of each paradigm claim that their beloved paradigm is better than the others, and language advocates will tell you that their programming language is better than the rest.

What actually happens is that the market drives almost every trend in programming language usage. If you aim to work at a company with enterprise needs, you should expect the company to use a programming language with enterprise-level support. So you have enterprises using Java and .NET, with a few of them using JVM languages like Scala, or languages like F#. And then you have companies using programming languages where, as they believe, you do not need to think too much, like Visual Basic .NET, because it is cheaper to train programmers in a language that aims to be easy to use than to train experts in a language that is hard to learn. Tell me if I lie: “every corporate environment exposed to the internet has at least one bug appearing each time that you operate that environment”. Does this scheme of using programming languages that aim to be easy to use, productive and easy to learn actually work? Personally, I do not see any difference. Everyone can learn programming, but not everyone should program, and the problem is not specifically experience. I know programmers with more than ten years of experience who have been making the same mistakes for many years.

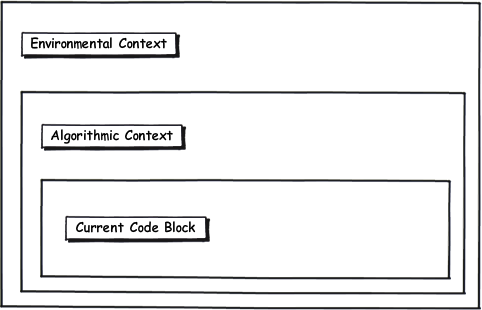

Programming requires concentration and a clear idea of what happens in the code, not just in one line: you must keep in view the current block, its algorithmic context and its environmental context. So when you are programming, you are using almost every feature of your memory. If you have not developed some aspect of your memory, your code is more likely to be buggy. Logical thinking is required too, without forgetting where that thinking is applied: the current block of code, its algorithmic context and its environmental context. Can you create a new thread in a web server?

I have worked with almost every programming paradigm, with languages like Java, C/C++, Haskell, Lisp, Python, Scala, JavaScript and CoffeeScript, among a long list of programming languages and domain-specific languages. In none of them have I met a skilled programmer who is not concentrated on his work, free of distractions. A skilled programmer keeps his mind on the code, whatever the environment. It is probably harder to concentrate in certain environments, but I have never met a skilled programmer who cannot put his attention on the code. I must admit that working as a freelancer has enhanced my skills: I have almost no distractions, and I am now more concentrated on my work. The quality of my code has increased; I was able to study new languages like Haskell, Scala and CoffeeScript, among others, and I have learned their theoretical basis. Nobody interrupts my work, and it is certainly flowing. Just imagine how productive I am without annoying interruptions.

I am doing well. I have top scores on every job-related social network: where the maximum is 5.0 I have 4.99, and where the maximum is 10.0 I have 9.89, with more than 10 jobs on each one and extremely gratifying recommendations. I have worked with highly interdisciplinary teams, delivering exceptionally high-quality products. I am doing a terrific job, and I will continue doing it because I have the ability to program and the ability to learn.